The Bible has stepped in to help translate from and to lesser used languages, says S.Ananthanarayanan.

The Old Testament has it that soon after the Flood, a united people who spoke in one language were fast building a tower, the legendary tower of Babel, which would reach high into the sky. God wished to stop the progress and He sent down different languages. This cut communications among the workers and the project came to a standstill. And language has long been the barrier in human enterprise.

Technology, with software that readily translates text from one language to another, seems at last to be connecting different languages. On the Internet and even cell phones, this happens almost seamlessly, so that persons using different languages do not even notice that the other person is using another language. The best translation programs, however, need large resources of specifically prepared text matter in the different languages to be able to bring this about. These resources are available with the major languages, like English, French, German, Spanish, Chinese, and also a great many others, but not with lesser used languages, like Faroese, spoken in the Faroe Islands, between Norway and Iceland, or Gallican, spoken by a community in north-western Spain, or Akawaio, a Caribbean language, Aukan spoken by a group in Surinam or Cakchiquel, a Mayan dialect or even some Indian languages. Technology is hence not able to connect speakers of these languages with the vast academic and commercial material now on tap in other languages.

A team working in the University of Copenhagen, however, hopes to set this right with the help of the Bible, which contains sizeable text that has been translated into almost every language. Prof Anders Søgaard, with Seljko Agic and Dirk Hovy, at the Centre for Language Technology at the university, have described their work, which uses existing translations of the Bible as a bridge to help lesser languages access the translation resources available for the major ones, in their paper, “If all you have is a bit of the Bible"presented at the annual meeting of the Association of Computational Linguistics, which was held at Beijing in July this year

The special preparation of the text matter, which translation software needs, consists basically of adding a label to identify words according to the role, like the part of speech, that the words play in the sentence. A human bilingue goes about translation by understanding meaning from text in one language and then expressing the same message in the second language. A machine process, on the other hand, has no understanding and only manipulates symbols. As there are different rules of gender, number, tense and word-order in different languages, a simple, word-by-word substitution would not work and there has to be an intermediate, abstract form, derived from the text in one language, before it can be converted into text in the second language.

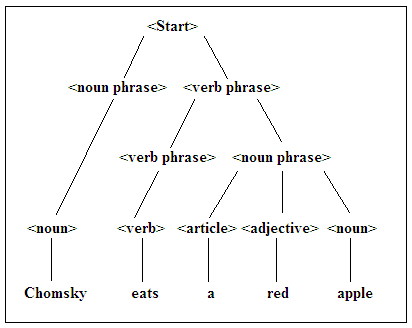

Important work on analysis of language, to understand how sentences are formed, was carried out by the language philosopher Noam Chomsky. The Chomsky hierarchy reduces a sentence in any language into its basic components, like nouns, adjectives, articles or verbs, arranged according to the rules that apply to that language. An instance is shown in the illustration of the analysis, from bottom, up, of the sentence, “Chomsky eats a red apple”. The sentence can be seen as deriving from the basic form of a <noun phrase> and a <verb phrase>. The <noun phrase> reduces to just the proper noun, ‘Chomsky’. The <verb phrase>, however, has structure, it first reduces to a <verb phrase> and a <noun phrase>. The second order <verb phrase> goes to the verb, ‘eats’, while the <noun phrase> has more structure, of an <article>, ‘a’, an <adjective>, ‘red’ and the <noun>, ‘apple’.

Now, with this starting structure of <noun phrase> and <verb phrase>, we can attempt expressing the sentence in French. The first

, which is a singular, masculine noun, goes just to ‘Chomsky’. In the , the verb, ‘eats’ translates to ‘mange’, which takes care that it is in the singular, and not ‘mangent’, which is plural, if there had been two persons going at that apple. The next has three components, like this: first is ‘une’, which is the article, ‘a’, in the singular, and femenine, as ‘apple’ in French is feminine, the second is ‘rouge’, for ‘red’ which, in this case, does not change form for masculine or feminine, and then ‘pomme’ for apple. But the rule in French is that the adjective goes after the noun that it qualifies. The sentence hence becomes, “Chomsky mange une pomme rouge’, with no thought for who Chomsky is or the colour of apples – but we do need two inputs – the equivalent words for ’Chomsky’, ‘eats’, ‘a’, ‘red’ and ‘apple’ and also that they are nouns, verb, article and adjective, so that we can apply the proper rules.

Data base

While we could find bare equivalent words in a dictionary, this would not serve the purpose in most cases, as many words have alternate meanings and are also used as different parts of speech in different contexts. For a computer system to be able to recognise these differences, what we need is mass of existing text matter, where ideally all the words that we need have been used, in different contexts, and a label has been attached to each word to show the nature of the use of the words, that is to say, as what part of speech, like noun or verb, and also its context. Attaching such labels to words in a list is called ‘tagging’ and a collection of text that has the classifying data attached is called a ‘tagged corpus’. If such resources exist, they enable computers to rapidly extract the structure of text in one language and generate text in another language. Software builds on this basis with more analysis, like statistical data and the result is very efficient translation, ‘on the fly’. But the basis for the software to function is the existence of classified text collections which helps identify individual words and their role in a sentence or a group of sentences.

In the major languages these resources have been in creation since decades, but not with minor languages or those not in the mainstream. This is where the University of Copenhagen group has made use of the Bible, which consists of parallel text in all languages. As the text of the Bible follows rigorous translation, verse by verse, ‘word alignment’ of the versions in different languages leads to acquisition of ‘parts of speech taggers’ for the lesser language based on the existing tagging of a brace major languages. The Bible hence serves as a bridge for tagging resources to become available across languages

“The Bible has been translated into more than 1,500 languages……and the translations are extremely conservative, the verses have a completely uniform structure……we teach the machines to register what is translated and, find similarities between the annotated and unannotated texts so that we can produce exact computer models of 100 different languages,” says Anders Søgaard. The resources have been made available to other developers and researchers, he says, which should help create language technology for languages such as Swahili, Wolof and Xhosa that are spoken in Nigeria!

------------------------------------------------------------------------------------------