Water guards its secrets well. But nature’s method for learning has broken through to some of them, says S.Ananthanarayanan.

Water, on which all life depends and which covers most of the earth's surface, is one of the least understood of substances. It is an exceptionally good solvent, it is neither acidic nor alkaline, and it is uniquely suited to carbon-based life as well as to a host of industrial uses. But some of its properties are fundamentally different from those of other substances, and they are rightly described as anomalies.

These peculiarities could, in principle, be understood through an 'ab initio' study of the forces between the atoms and molecules of water, using the rules of quantum mechanics, the mechanics that governs very small distances. Matter at very small dimensions behaves differently from matter in bulk, and we now have methods to calculate what the interactions at these dimensions should be.

The trouble is that computing solutions of reasonable accuracy takes so long as to be impractical. Tobias Morawietz, Andreas Singraber, Christoph Dellago and Jörg Behler, of the Chair for Theoretical Chemistry, Ruhr University, and the Faculty of Physics, University of Vienna, have used an alternative: a laboratory imitation of the way a natural system, like the brain, approaches a problem. They describe the exercise in their paper in the Proceedings of the National Academy of Sciences (PNAS), and report confirmation of the belief that it is a set of weak forces, other than the direct electric attraction or repulsion between charges, that leads to the peculiar properties of water.

The reason substances occupy volume is that their component parts, the molecules, are in agitation. As warmer substances are in a state of greater agitation, warmer things occupy more space, and things expand when warmed. Conversely, they contract when cooled.

Liquid water also behaves in this way when it is reasonably warm. But when it is cooled below about 4°C, it starts to expand on further cooling instead of contracting. This goes on until it freezes, at which point it expands by a whole 9%. Once ice has formed, it behaves like a normal solid and contracts when cooled, until it is exceedingly cold, about -203°C, when it starts expanding again.
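The anomaly can be made concrete with a few numbers. The sketch below uses rounded densities from standard reference tables for water and ice at atmospheric pressure (textbook approximations assumed here, not data from the PNAS paper) to locate the density maximum and to work out the volume increase on freezing.

```python
# Approximate densities of liquid water (g/cm^3) at 1 atm, rounded from
# standard reference tables; assumed values for illustration.
LIQUID_DENSITY = {
    0: 0.99984,
    2: 0.99994,
    4: 0.99997,
    6: 0.99994,
    8: 0.99985,
    10: 0.99970,
    20: 0.99821,
}
ICE_DENSITY_0C = 0.9167  # approximate density of ordinary ice at 0 °C


def temperature_of_max_density(table):
    """Return the tabulated temperature at which liquid water is densest."""
    return max(table, key=table.get)


def expansion_on_freezing(rho_liquid, rho_ice):
    """Fractional volume increase when a fixed mass turns from liquid into ice."""
    # volume = mass / density, so V_ice / V_liquid = rho_liquid / rho_ice
    return rho_liquid / rho_ice - 1.0


t_max = temperature_of_max_density(LIQUID_DENSITY)
pct = 100 * expansion_on_freezing(LIQUID_DENSITY[0], ICE_DENSITY_0C)

# Below the maximum, density falls as the water gets colder: it expands on cooling.
cooling_expands = LIQUID_DENSITY[0] < LIQUID_DENSITY[2] < LIQUID_DENSITY[4]

print(f"Density maximum in the table: {t_max} °C")
print(f"Volume increase on freezing: {pct:.1f} %")
```

The ratio of the two densities is all that is needed for the 9% figure, since the mass does not change when water freezes.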

A partial explanation for this anomaly has been that, below 4°C or in ice, the molecules of water arrange themselves less closely together than they do when they are warmer or liquid. To explain the anomaly as 'more space being taken', of course, is only a restatement of the anomaly and not an explanation. A better line of reasoning has been that water involves not only the electric attraction or repulsion between charged particles, but also weak forces, known as van der Waals forces, which arise from pairs of separated charges, from charges in motion, and so on. The water molecule, with two hydrogen atoms bonded to one oxygen atom, takes a stable V shape; it is therefore not symmetrical, and it also acts like a pair of separated charges. The interplay of these forces, together with the specific ratio of the masses of the atoms in water, results in the sum total of the forces crossing a tipping point at about 4°C, and again on freezing.
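One standard textbook way of modelling van der Waals attraction between neutral molecules is the 12-6 Lennard-Jones potential: a steep repulsion at short range and a weak attraction falling off as the sixth power of distance. It is used below purely as an illustration, not as the method of the PNAS paper. The oxygen-oxygen parameters are assumptions chosen to be close to those of the simple SPC/E water model; in such models the electrical part of the interaction is added separately, as Coulomb forces between partial charges on the atoms.

```python
# Illustrative oxygen-oxygen parameters, close to those of the SPC/E
# water model; treat them as assumptions.
SIGMA_NM = 0.3166   # separation at which the potential crosses zero, in nm
EPSILON_KJ = 0.650  # depth of the attractive well, in kJ/mol


def lennard_jones(r, sigma=SIGMA_NM, epsilon=EPSILON_KJ):
    """12-6 Lennard-Jones energy: r^-12 repulsion plus r^-6 van der Waals attraction."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


r_min = 2 ** (1 / 6) * SIGMA_NM  # analytic position of the energy minimum

for r in (0.30, r_min, 0.40, 0.60, 1.00):
    print(f"r = {r:.3f} nm  ->  U = {lennard_jones(r):+.4f} kJ/mol")
```

The well is less than 1 kJ/mol deep, small compared with typical chemical bond energies of hundreds of kJ/mol, which is why such forces are called weak.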

This conjecture has been based on computer simulations of the actual forces at play, starting from a quantum mechanical computation of the forces that arise from the atomic structure and working up to the forces on the molecules of water in bulk. Such simulations, however, are feasible only for short time scales and small systems, the PNAS paper says, and are of restricted utility.
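A rough way to see why is to compare how the cost grows with the size of the system. Conventional density functional theory, the usual workhorse of ab initio simulation, is often quoted as scaling roughly with the cube of the number of atoms, while a potential built from short-range, local terms scales roughly linearly. The exponents below are rules of thumb assumed for illustration, with all constant factors set to one.

```python
def relative_cost(n_atoms, exponent, n_ref=100):
    """Cost relative to a reference system of n_ref atoms, assuming cost ~ N**exponent."""
    return (n_atoms / n_ref) ** exponent


for n in (100, 1_000, 10_000, 100_000):
    cubic = relative_cost(n, 3)   # rule-of-thumb scaling of conventional DFT
    linear = relative_cost(n, 1)  # local, cutoff-based potential
    print(f"{n:>7} atoms: ab initio x{cubic:,.0f}   local potential x{linear:,.0f}")
```

Going from 100 to 1,000 atoms multiplies the cubic cost by a thousand but the linear cost only by ten, and the cost of each extra time step multiplies on top of that.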

Neural networks

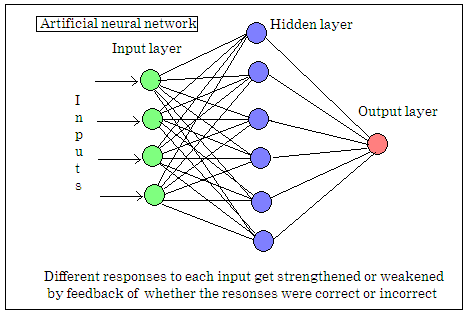

A more efficient, if less exact, yet very powerful method of computation is the one used by natural systems such as the brain. A digital computer manipulates actual numbers and must process each input in a programmed way; a neural network, instead, arrives at the answer through the random and simultaneous treatment of the inputs at different nodes. Its working is not so much a method of computation as a method of managing the random process, with successful operations becoming more likely to be repeated, and unsuccessful ones less likely.
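The idea of successful operations becoming more likely to be repeated can be illustrated with a linear reward-inaction learning automaton, a classic toy model from reinforcement learning. It is swapped in here as an illustration and is not a model of the brain or of the PNAS work. The system picks one of several actions at random; when the choice succeeds its probability is raised, and when it fails nothing changes. The number of actions, the learning rate and which action counts as 'correct' are arbitrary assumptions.

```python
import random


def train_automaton(n_actions=3, correct=2, rate=0.05, trials=2000, seed=0):
    """Linear reward-inaction scheme: only rewarded choices shift the probabilities."""
    rng = random.Random(seed)
    probs = [1.0 / n_actions] * n_actions  # start with no preference
    for _ in range(trials):
        choice = rng.choices(range(n_actions), weights=probs)[0]
        if choice == correct:  # the (assumed) environment rewards only this action
            # raise the successful choice, scale the others down; the sum stays 1
            probs = [p + rate * (1.0 - p) if i == choice else p * (1.0 - rate)
                     for i, p in enumerate(probs)]
        # on failure: no update ("inaction")
    return probs


final = train_automaton()
print([round(p, 3) for p in final])
```

Nothing in the loop computes the answer directly; repeated random trials, plus a rule that rewards success, are enough to make the successful choice dominate.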

A task like recognizing handwritten numbers, for instance, is among the simplest possible for a human. For a computer, however, the same task involves taking in the inputs from thousands of light-sensitive elements, like the cells of the retina, matching their distribution one by one, through yes/no comparisons, against a library of data, and deciding what the image looks like after millions of computations and comparisons. The task would not only be a huge computational job; it might not even be feasible, or reliable.
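The serial, compare-everything approach described above can be sketched as nearest-template matching: each bitmap is compared pixel by pixel, with one yes/no test per pixel, against every entry in a reference library, and the closest entry wins. The tiny 3x5 'digits' and the library are hypothetical; real images have thousands of pixels and real libraries many entries, which is where the cost explodes.

```python
# Hypothetical 3x5 bitmaps ("#" = ink) standing in for a reference library.
LIBRARY = {
    "0": ["###",
          "#.#",
          "#.#",
          "#.#",
          "###"],
    "1": [".#.",
          "##.",
          ".#.",
          ".#.",
          "###"],
    "7": ["###",
          "..#",
          ".#.",
          ".#.",
          ".#."],
}


def mismatches(a, b):
    """Count pixels where two bitmaps disagree (one yes/no test per pixel)."""
    return sum(pa != pb for row_a, row_b in zip(a, b) for pa, pb in zip(row_a, row_b))


def classify(image):
    """Compare the image with every template; return the best label and the tests made."""
    comparisons = 0
    best_label, best_score = None, None
    for label, template in LIBRARY.items():
        score = mismatches(image, template)
        comparisons += len(template) * len(template[0])
        if best_score is None or score < best_score:
            best_label, best_score = label, score
    return best_label, comparisons


# A "hand-written" 7 with a missing corner pixel
smudged_seven = ["##.",
                 "..#",
                 ".#.",
                 ".#.",
                 ".#."]
print(classify(smudged_seven))  # ('7', 45)
```

Even this toy case needs 45 pixel tests for one image; the count grows with both the image size and the size of the library.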

The brain, on the other hand, does not process the data in the same serial way. The neurons, or brain cells, that receive different inputs first send random outputs to other neurons, all at the same time. If the results, which are fed back, are successful, changes take place along the various sequences of cells that make the same sequences more likely if the input is repeated; if the result is unsuccessful, those sequences become less likely. Learning of this kind takes place thousands of times, with different variations of the inputs that lead to the same successful outputs. The system thus gets equipped with a network of neuron paths, each of which responds independently with a given net result to a number of nearly similar sets of inputs. It is then able to generate nearly the same output, and with great speed, for variations of a set of inputs, even variations it has not encountered before. A digital computer, by contrast, would be hard put to recognize even reasonably well-matching data as representing the same shape, since it would need to make serial comparisons of a very large number of complete input sets against some reference library.
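A minimal artificial version of learning by strengthening connections is Rosenblatt's perceptron rule: the weights from the inputs to an output node are nudged after every wrong answer, over repeated passes through the examples, and the trained node can then handle variants it has not seen. It corrects only on failure, a simplification of the process described above. The shapes (a vertical versus a horizontal bar on a 3x3 grid), the learning rate and the number of passes are assumptions chosen for illustration.

```python
def to_pixels(rows):
    """Flatten a 3x3 picture ('#' = ink) into a list of 0/1 inputs."""
    return [1 if ch == "#" else 0 for row in rows for ch in row]


def predict(weights, bias, x):
    """The output node fires (+1) when the weighted input exceeds zero, else -1."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else -1


def train_perceptron(examples, epochs=10, rate=1.0):
    weights, bias = [0.0] * len(examples[0][0]), 0.0
    for _ in range(epochs):
        errors = 0
        for x, target in examples:
            if predict(weights, bias, x) != target:
                # Shift connections from active inputs toward the right answer
                weights = [w + rate * target * xi for w, xi in zip(weights, x)]
                bias += rate * target
                errors += 1
        if errors == 0:  # every training example is now answered correctly
            break
    return weights, bias


VERTICAL = to_pixels([".#.", ".#.", ".#."])    # label +1
HORIZONTAL = to_pixels(["...", "###", "..."])  # label -1
weights, bias = train_perceptron([(VERTICAL, 1), (HORIZONTAL, -1)])

# Variants never seen during training
broken_vertical = to_pixels([".#.", ".#.", "..."])
smudged_horizontal = to_pixels(["...", "###", "..#"])
print(predict(weights, bias, broken_vertical), predict(weights, bias, smudged_horizontal))
```

No library of stored pictures is consulted at prediction time; what was learned lives entirely in the weights.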

The same consideration would apply to teaching a computer to play chess, for instance. With 32 pieces and 64 squares, the theoretically possible moves are very numerous, and so are the possible replies of the opponent. The number of possible positions after even a few moves is hence impossibly large, and even a powerful computer that works by this 'brute force' method may miss the correct move in a given position. But the human mind takes in the whole board at once, ignores moves that it can recognize as unimportant, and sees patterns that represent 'strong' positions, in a way that would be difficult to program a computer to do. The brain is able to do this because it does not work like the computer: it employs networks of nerve cells whose responses to inputs have been programmed by experience, and which can generate outputs even from partial data.
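The 'impossibly large number' is easy to check. Chess programmers tabulate the exact number of legal move sequences from the starting position (the so-called perft counts): 20 after one move by White, 400 after one move each, and so on. Beyond that, a commonly quoted average of about 35 legal moves per position, an approximation assumed here, gives a rough estimate of how a brute-force search grows; the search speed of a billion positions per second is likewise an assumption.

```python
# Exact numbers of legal move sequences from the initial chess position
# for depths of 1 to 6 plies (half-moves), as tabulated by chess programmers.
PERFT = [20, 400, 8_902, 197_281, 4_865_609, 119_060_324]

BRANCHING = 35  # commonly quoted average number of legal moves (approximation)


def estimated_leaves(plies, branching=BRANCHING):
    """Rough number of positions at the given depth of a full game tree."""
    return branching ** plies


for depth, exact in enumerate(PERFT, start=1):
    print(f"{depth} plies: exact {exact:>13,}   estimate {estimated_leaves(depth):>13,}")

# Looking ten moves ahead for each side (20 plies) by brute force,
# at an assumed 1e9 positions per second:
years = estimated_leaves(20) / 1e9 / (3600 * 24 * 365)
print(f"20 plies: about {estimated_leaves(20):.1e} positions, ~{years:.1e} years")
```

The estimate overshoots at shallow depths because the opening position offers only 20 moves, but the exponential growth is the point: every extra ply multiplies the work by dozens.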

Artificial neural networks are computer programmes that imitate the brain by creating layers of nodes that receive inputs from one layer and send outputs to the next, with 'learning' taking place through software-based 'strengthening' of successful responses. The number of such nodes possible even in a large assemblage of computers cannot compare with the number of neurons in the brain, but such networks are able to deal with problems that would be out of the reach of normal, serial computer programmes.
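The layered arrangement can be sketched in a few lines. The network below has two input nodes, a hidden layer of two nodes and one output node, each node firing (1) when the weighted sum of its inputs exceeds a threshold. The weights are hypothetical and set by hand rather than learned, to keep the example short. With them the network computes exclusive-or (XOR), a function that no single threshold node can compute on its own, which is one reason layers matter.

```python
def step(z):
    """Threshold activation: the node 'fires' when its input is positive."""
    return 1 if z > 0 else 0


def layer(inputs, weights, biases):
    """Each node takes a weighted sum of all outputs from the previous layer."""
    return [step(sum(w * x for w, x in zip(node_w, inputs)) + b)
            for node_w, b in zip(weights, biases)]


# Hand-chosen (hypothetical) weights: hidden node 1 acts as OR,
# hidden node 2 as AND, and the output fires for "OR but not AND".
HIDDEN_W, HIDDEN_B = [[1, 1], [1, 1]], [-0.5, -1.5]
OUTPUT_W, OUTPUT_B = [[1, -1]], [-0.5]


def network(x1, x2):
    hidden = layer([x1, x2], HIDDEN_W, HIDDEN_B)
    return layer(hidden, OUTPUT_W, OUTPUT_B)[0]


for x1 in (0, 1):
    for x2 in (0, 1):
        print(x1, x2, "->", network(x1, x2))
```

In a trained network the same structure is used, but the weights are adjusted automatically from examples instead of being written in by hand.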

The authors of the PNAS paper hence devised algorithms to create a network of nodes that would receive inputs and whose connections would be strengthened when they arrived at valid results. Using this model, different forms of dynamics of water molecules could be tried out, and the model was able to correctly work out the actual expansion/contraction behavior, the density change on freezing, and even electrical properties of water, provided the weak van der Waals forces were taken into account. The exercise hence succeeds in showing that it is the balance of van der Waals forces and the forces of asymmetric water molecules that brings about the celebrated anomalies.
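A heavily simplified sketch can show how a neural network may stand in for the quantum-mechanical energy calculation. It is loosely modelled on the high-dimensional neural network potentials associated with Behler, which is an assumption about the general approach and not the paper's code. Each atom's surroundings are summarised by radial 'symmetry functions' of the distances to its neighbours within a cutoff, a small network turns these numbers into an energy contribution for that atom, and the total energy is the sum over atoms. Only one kind of atom is used here. In a real application the weights are fitted to many reference quantum-mechanical calculations; here they are random placeholders, and the cutoff, Gaussian widths and network size are illustrative assumptions.

```python
import math
import random

CUTOFF = 6.0             # neighbour cutoff radius (assumed, in angstrom)
ETAS = [0.05, 0.2, 1.0]  # widths of the radial Gaussian descriptors (assumed)
HIDDEN = 5               # hidden nodes in the toy network (assumed)


def cutoff_fn(r):
    """Smoothly switch neighbour contributions off at the cutoff radius."""
    return 0.5 * (math.cos(math.pi * r / CUTOFF) + 1.0) if r < CUTOFF else 0.0


def radial_descriptors(i, positions):
    """Radial symmetry functions G_k = sum_j exp(-eta_k * r_ij^2) * fc(r_ij) for atom i."""
    g = [0.0] * len(ETAS)
    for j, pos in enumerate(positions):
        if j == i:
            continue
        r = math.dist(positions[i], pos)
        for k, eta in enumerate(ETAS):
            g[k] += math.exp(-eta * r * r) * cutoff_fn(r)
    return g


rng = random.Random(42)  # placeholder weights; a real potential is fitted to reference data
W1 = [[rng.uniform(-1, 1) for _ in ETAS] for _ in range(HIDDEN)]
B1 = [rng.uniform(-1, 1) for _ in range(HIDDEN)]
W2 = [rng.uniform(-1, 1) for _ in range(HIDDEN)]


def atomic_energy(g):
    """A small feed-forward network maps one atom's descriptors to its energy."""
    hidden = [math.tanh(sum(w * x for w, x in zip(row, g)) + b) for row, b in zip(W1, B1)]
    return sum(w * h for w, h in zip(W2, hidden))


def total_energy(positions):
    """Total energy as a sum of atomic contributions."""
    return sum(atomic_energy(radial_descriptors(i, positions)) for i in range(len(positions)))


atoms = [(0.0, 0.0, 0.0), (2.8, 0.0, 0.0), (0.0, 2.9, 0.3), (1.5, 1.4, 2.6)]
shifted = [(x + 10.0, y - 3.0, z + 1.0) for x, y, z in atoms]
relabelled = atoms[::-1]
print(total_energy(atoms), total_energy(shifted), total_energy(relabelled))
```

Because only interatomic distances enter, the energy is unchanged when the whole cluster is moved or when identical atoms are relabelled, and because each atom only sees neighbours within the cutoff, the cost grows roughly in proportion to the number of atoms.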

------------------------------------------------------------------------------------------