The digital camera may be considered a close imitation of the animal eye. Electronic sensors take the place of the light-sensitive cells in the eye, and their electrical signals are put together to form the image, in the same way as the animal brain recreates an image from the signals sent by the eyes.

Simon Thiele, Kathrin Arzenbacher, Timo Gissibl, Harald Giessen and Alois M. Herkommer, of the research centre SCoPE at the University of Stuttgart, describe in the journal Science Advances a way to go a step further: recreating in the lab the feature that gives animal eyes greater clarity at the central point of gaze, set within a wider, surrounding field of view.

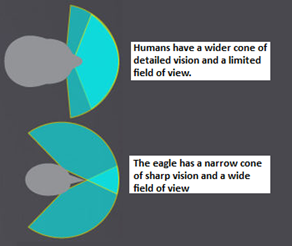

The eagle is the most striking example of how the animal eye has adapted to achieve unparalleled sensitivity. The eagle has vision as sharp as 20/4: she can see from twenty feet what a human with normal eyesight sees at four feet. At four feet, most of us can make out a grain of sand; the eagle can see it from twenty feet. In the same way, she can spot prey the size of a field mouse from a hundred metres.
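Taking the 20/4 figure at face value, the comparison reduces to a simple ratio: the eagle resolves at any distance what a 20/20 eye resolves at one fifth of that distance. The short sketch below, whose function names are hypothetical, works this through for the grain of sand and the field mouse. By the same simple scaling, a person with normal sight would have to be about 20 metres from the mouse to see it as clearly.

```python
# Snellen fraction: test distance / distance at which a normal (20/20) eye
# resolves the same detail. For 20/4 vision the factor is 20 / 4 = 5.


def acuity_factor(test_distance: float, normal_distance: float) -> float:
    """How many times farther away the viewer resolves the same detail
    as a 20/20 eye (for example 20/4 gives 5.0)."""
    return test_distance / normal_distance


def equivalent_human_distance(eagle_distance: float, factor: float) -> float:
    """Distance at which a 20/20 human resolves what the eagle resolves at
    eagle_distance (any unit, as long as it is used consistently)."""
    return eagle_distance / factor


factor = acuity_factor(20, 4)
print(factor)                                  # 5.0
print(equivalent_human_distance(20, factor))   # 4.0 feet: the grain of sand
print(equivalent_human_distance(100, factor))  # 20.0 metres: the field mouse
```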

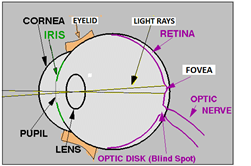

Eyes are not equally sensitive at all points in the field of view. The best resolution is right at the centre, in a cone just two degrees wide, about the width of one’s thumb at arm’s length. This part of the image is formed at the centre of the retina, in a small depression called the fovea, which has a dense concentration of light-sensitive cells. This region, about 1.5 mm wide, contributes nearly half the information carried by the optic nerve. It is packed with cones, the cells that are sensitive to colour and detail, rather than rods, which respond in dim light.
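The ‘thumb at arm’s length’ comparison can be checked with the standard formula for the full angle subtended by an object of width w at distance d, theta = 2 * atan(w / (2 * d)). The sketch assumes typical adult values of about 2 cm for the width of a thumb and about 60 cm for arm’s length; both figures are assumptions, not values from the study.

```python
import math


def angular_size_deg(width: float, distance: float) -> float:
    """Full angle, in degrees, subtended by an object of the given width,
    centred on the line of sight at the given distance (same units)."""
    return math.degrees(2 * math.atan(width / (2 * distance)))


# Assumed typical values: thumb about 2 cm wide, arm's length about 60 cm.
print(round(angular_size_deg(2.0, 60.0), 2))  # 1.91, i.e. roughly two degrees
```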

The eagle has an even greater concentration of colour-sensitive cells in the fovea, and in the narrow part of the field of view that is focused on this region she can make out great detail, as if she were using a telephoto lens. The rest of the retina, however, receives light from a wide field, so that the eagle is warned of other birds or danger even while she sees a selected central region with the highest clarity, as if under a spotlight. The eagle’s fovea is even sensitive to ultraviolet light, which lets her make out the urine trails of rodents and track them down!

The digital camera creates images in much the same way as the animal eye. In place of the retina, the camera has an array of light-sensitive electronic units, which are rapidly scanned, and the signal from each unit is conveyed to a pixel of the display screen. Both the ‘perception’ and the display are a series of numbers that indicate how bright each pixel is, and the distribution of pixels can be analysed with software. Computers can compare the pattern of pixels in an image with the patterns created by known objects, and this gives us systems that can recognise patterns, read letters in different styles of handwriting and even recognise faces. The technology has advanced to the point where cameras mounted on road vehicles can make out obstructions, even pedestrians on the road, and the vehicles can be driven automatically.
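Comparing the pattern of pixels in an image with the pattern of a known object can be illustrated, in its very simplest form, by template matching: slide a small reference pattern across the image and score each position by the sum of squared differences in brightness. The sketch below is a toy illustration with hypothetical names and made-up pixel values; practical systems that read handwriting, recognise faces or detect pedestrians rely on far more robust techniques.

```python
from typing import List, Tuple

Image = List[List[int]]  # rows of grey levels, 0 (dark) to 255 (bright)


def ssd(image: Image, template: Image, top: int, left: int) -> int:
    """Sum of squared differences between the template and the image patch
    whose top-left corner is at (top, left). Lower means more similar."""
    total = 0
    for r, row in enumerate(template):
        for c, value in enumerate(row):
            diff = image[top + r][left + c] - value
            total += diff * diff
    return total


def best_match(image: Image, template: Image) -> Tuple[int, int]:
    """Try every position where the template fits inside the image and
    return the (row, col) of the most similar patch."""
    th, tw = len(template), len(template[0])
    ih, iw = len(image), len(image[0])
    positions = [(r, c) for r in range(ih - th + 1) for c in range(iw - tw + 1)]
    return min(positions, key=lambda pos: ssd(image, template, *pos))


# A tiny 5 x 6 "scene" containing a bright cross, and a 3 x 3 template of it.
scene = [
    [10, 10, 10, 10, 10, 10],
    [10, 10, 200, 10, 10, 10],
    [10, 200, 200, 200, 10, 10],
    [10, 10, 200, 10, 10, 10],
    [10, 10, 10, 10, 10, 10],
]
cross = [
    [10, 200, 10],
    [200, 200, 200],
    [10, 200, 10],
]
print(best_match(scene, cross))  # (1, 1)
```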

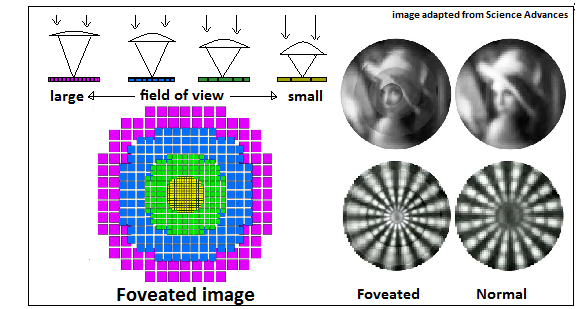

Simon Thiele and his colleagues at the University of Stuttgart note that this and other applications call for the same concentration of detail in the central part of the image as in the animal eye. A self-driving vehicle, for instance, needs to make out in detail the objects that lie directly in its path, more than objects at the periphery of the field of view. Resolving the centre of the field more finely than the rest would save processing resources and make the whole arrangement more efficient.
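A rough pixel budget shows why concentrating detail at the centre saves processing. The sketch assumes a hypothetical 4000 × 4000 pixel frame in which only a central 1000 × 1000 window keeps full resolution, while the periphery is downsampled four times in each direction; these numbers are illustrative and are not taken from the Stuttgart work.

```python
def uniform_samples(width: int, height: int) -> int:
    """Pixels to process if the whole frame is kept at full resolution."""
    return width * height


def foveated_samples(width: int, height: int,
                     fovea_w: int, fovea_h: int,
                     periphery_factor: int) -> float:
    """Pixels to process if only a central fovea_w x fovea_h window keeps
    full resolution and the rest of the frame is downsampled by
    periphery_factor in each direction (periphery_factor**2 in pixel count)."""
    fovea = fovea_w * fovea_h
    periphery = (width * height - fovea) / periphery_factor ** 2
    return fovea + periphery


full = uniform_samples(4000, 4000)                 # 16,000,000 pixels
fov = foveated_samples(4000, 4000, 1000, 1000, 4)  # 1,937,500 pixels
print(full, fov, round(fov / full, 3))             # 16000000 1937500.0 0.121
```

Under these assumptions the foveated frame needs about 12% of the pixels of the uniform one; the real saving depends entirely on the window size and downsampling factor chosen.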

The electronic camera, unfortunately, works with light-sensing units that are bulky compared to the cells of the animal eye, and these do not allow the creation of a ‘fovea’ with a denser population of sensors in one region. One development in electronic cameras, however, is the wafer-thin, multi-aperture array, in which a great many micro-lenses each form an image and the images are combined using software. Because information collected over a wide area contributes to the final image, this arrangement gives good resolution with less bulk and also avoids the distortion that comes with large lenses. It does, however, call for intense processing power, and cannot deliver the highest acuity with the speed that applications require.

Foveated imaging

The University of Stuttgart group observes that micro-lenses can now be built efficiently by 3D printing, and that this makes it possible to create an arrangement of lenses that forms an image which is well resolved in the central area and surrounded by a less well resolved region. The approach is to use 3D printing to cover a light-sensitive electronic chip with an array of sets of four lenses each. The four lenses in a set form images of the same size on the chip, but they have different focal lengths, so each image covers a different field of view. The first lens creates an image of a wide field of view, the second of a narrower field, the third of a narrower field still, and the fourth is a telephoto lens that creates a magnified image of the centre of the scene. The images from each set of four are then merged by software, and the final image has high resolution of detail at the centre, with progressively less detail in larger, concentric circles.
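Two parts of this scheme can be sketched in Python. First, for an ideal thin lens forming an image of width d on the chip, the full field of view is 2 * atan(d / (2 * f)), so lenses with the same image size but a longer focal length f see a narrower field. Second, the images can be merged by scaling each one to the angular area it covers and pasting the narrowest view on top at the centre. The focal lengths are hypothetical, the lenses are assumed distortion-free and aligned on a common axis, and the nearest-neighbour paste is a deliberately naive stand-in, not the fusion algorithm the Stuttgart group used.

```python
import math
from typing import List

Image = List[List[float]]  # square image: rows of brightness values


def field_of_view_deg(image_width: float, focal_length: float) -> float:
    """Full field of view (degrees) of an ideal thin lens that forms an
    image image_width wide on the chip (same units for both arguments)."""
    return math.degrees(2 * math.atan(image_width / (2 * focal_length)))


def resize_nearest(img: Image, size: int) -> Image:
    """Nearest-neighbour resize of a square image to size x size pixels."""
    n = len(img)
    return [[img[r * n // size][c * n // size] for c in range(size)]
            for r in range(size)]


def fuse_foveated(images: List[Image], fovs_deg: List[float]) -> Image:
    """Naive fusion of equally sized square images, ordered from the widest
    to the narrowest field of view. The canvas is sampled at the narrowest
    (telephoto) image's resolution; each image is scaled to the area its
    field covers and pasted centred, narrowest last, so the centre keeps
    full detail and the outer zones are progressively coarser."""
    n = len(images[0])
    half_tans = [math.tan(math.radians(fov) / 2) for fov in fovs_deg]
    # An image whose half-angle has tangent t spans n * t / t_narrowest pixels.
    scale = n / half_tans[-1]
    canvas_size = round(half_tans[0] * scale)
    canvas = [[0.0] * canvas_size for _ in range(canvas_size)]
    for img, t in zip(images, half_tans):
        size = round(t * scale)
        offset = (canvas_size - size) // 2
        patch = resize_nearest(img, size)
        for r in range(size):
            canvas[offset + r][offset:offset + size] = patch[r]
    return canvas


# Hypothetical focal lengths, in the same units as the image width:
# the longer the focal length, the narrower the field of view.
image_width = 1.0
focal_lengths = [0.7, 0.9, 1.4, 2.8]
fovs = [field_of_view_deg(image_width, f) for f in focal_lengths]
print([round(v, 1) for v in fovs])

# Four tiny 4 x 4 test images, filled with 1.0, 2.0, 3.0 and 4.0.
n = 4
images = [[[float(k + 1)] * n for _ in range(n)] for k in range(4)]
fused = fuse_foveated(images, fovs)
print(len(fused))  # 16: the wide view is sampled at telephoto resolution
```

With constant-valued test images, the fused canvas shows four nested squares: the telephoto image occupies the centre at its native resolution, and the wider views fill the outer rings with progressively coarser sampling.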

Such a camera, guiding a self-driving vehicle or used in robots, drones or even medical instruments such as endoscopes, would serve as an ‘eagle eye’, providing clear vision at the centre of the field while still covering a larger surrounding field in less detail. The point of focus can be shifted and adjusted, using the background as a guide, to search for or follow a moving object.

The lenses are between a hundredth and a tenth of a millimetre across, and the whole sensor is just a few square millimetres. “The computer that the device is connected to could have an IP address and the device could be controlled and viewed over the Internet, by a smart phone”, says a press release issued by the University.
