Seeing is believing, but it looks like we need to believe if we are to remember what we saw, says S.Ananthanarayanan.

Being able to recognise things, or faces, which we have seen before, is both to remember what it looked like and to know it is the same thing even if it were oriented differently the second time we see it. Automating the act of making out the object, or pattern recognition by machines, is hard enough. To be able to make it out even when it looks different, like humans can, is a puzzle that the computer world is yet to crack.

Understanding how the human eyes and brain can remember and recognise the great variety of objects that they can would help computer scientists find ways to improve the performance of machines. While there has been some progress towards this understanding, Mark W. Schurgin and Jonathan I. Flombaum, Department of Psychological and Brain Sciences, Johns Hopkins University, in their paper in the Journal of Experimental Psychology, bring out yet another aspect of the act of recognition of things briefly seen, by humans. With the help of a remarkably simple experiment, they show that people who see a thing briefly on two closely separated occasions are more likely to remember if they believe it was the same thing that they saw the second time too.

One feature of remembering objects or people is that we remember better if we see them in motion. When an object moves across the field of vision, it presents more than one view of its three-dimensional self and the collection of images we receive creates in our brain a more rounded picture. We are then better equipped to recognise the object when we see it again, even from a different angle or position that we have not encountered. This is equally true when we see people, of course, and when people move, we also see a unique and personal method of movement, which can identify the person even if seen the next time around in poor lighting!

Seeing a moving object is again not a passive act, but one where we move our eyes, head and body. The movement of the eyes is to bring an object that we see on the periphery, to the centre of our field of view. And these movements, along with the movement of the object itself, lead to muscular action being associated with the images, several of which, from different angles, get imprinted in our brain. One known part of the process of creating a memory, the John Hopkins paper says, is a ‘temporal association rule’. This is to say that a short encounter with an object, maybe a few seconds, or less, can be considered as creating a series of independent images, one changing into the other, of the same object. The way the images change over the short exposure gives the brain bases to imagine what changes in the object to expect in a subsequent exposure, to help recognition.

The experiment of the John Hopkins duo examined how the brain selects images of an object in motion, which were formed in quick succession, to store as changing images of a specific object. While the association with the movements of the eyes, as an object was seen in motion, would help identify all images as of the same object, there is an understanding that the brain uses its past encounters, or notions, known as ‘core knowledge,’ about the world, to make out which of the images that it sees are of the same object. One part of this knowledge is the way things move, which helps us identify the correct images of objects in motion. And the John Hopkins experiment set out to test this idea.

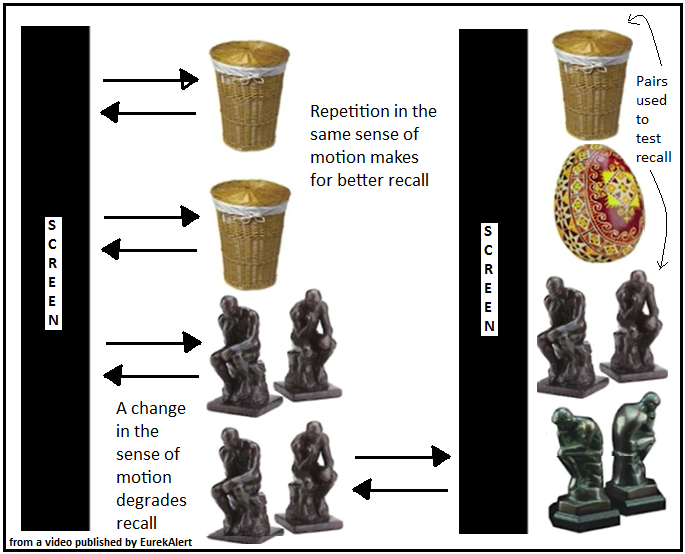

The experiment consisted of flashing a pair of images of an object, one after the other, in front of an observer. How well the observer recalled the object was then tested, to see if she could tell the difference between an object she had been shown and another that looked a lot like it. The effect of the experience of the observer, on how well she stored the two images of each object that she was shown, was tested through a variation in the way the two images of each object were shown. The way the objects were briefly displayed was by showing them to be darting out from behind a screen and then back to be hidden again, and doing this twice. In half the cases, however, the second appearance of the object was not from behind the same screen, but from behind another screen on the other side of the display, as shown in the picture.

The result of the trials was that objects shown both times from the same side of the display were remembered some 20% better. And the conclusion drawn is that both the images were stored as relevant images, to help recall, when the brain could expect that the object emerging from behind the same screen was the same object as had been seen before. When the object emerged from different sides, however, they were more likely some different objects and the two images were not stored together. The object was then less effectively recalled. "Your brain has certain automatic rules for how it expects things in the world to behave. It turns out, these rules affect your memory for what you see," says Mark Schurgin, graduate student at the University.

The image that physically appears before both the eye and a camera screen is physically only a collection of illumination values of arrays of nerve cells, pixels, or light sensing devices. It is the brain, or the pattern recognising software, that needs to make sense of the raw data. While pixel by pixel comparison against a recorded template could work only if the image shown is the same as what is in memory, machine systems employ different approaches to extract the essential features from the original, to look for in new images which may be not the same, but similar. And then, computers are programmed for ‘machine learning’, or to improve the process that they use for recognition, as and when they encounter more instances of images, and the feedback of how well they identified them.

Image processing by machines is growing in importance, with the development of driverless cars, automatic surveillance systems, robots to deal with production lines and for security of banking transactions. The present work by Schurgin and Flombaum could help program machine learning systems that derive value from images in motion, to select, based on past encounters, the images that need to be stored and from which it would be useful to learn.

------------------------------------------------------------------------------------------