Calculus, the master tool-kit of science, did not get here all at once, says S. Ananthanarayanan.

Calculus is a mathematical technique that is taught in high school and largely mastered by the time a science student graduates, and it is now the foundation of the physical sciences and technology. The present form of calculus, however, is the work of many, built up since the 17th Century, when Isaac Newton sowed its seeds, and the path by which it got here shadows the explosion in science since then.

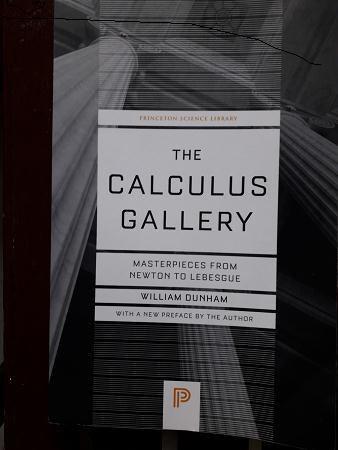

Princeton University Press has brought out a new edition of The Calculus Gallery, a 2005 masterpiece by William Dunham of Bryn Mawr College in Pennsylvania. The author introduces the major contributors to the growth of calculus and, just as the history of art can be told through selected works of the masters through the ages, he presents each mathematician through classic pieces of that mathematician's work, which refined or grew out of calculus, all the way to the 20th Century.

As Dunham says in the first ‘interlude’ of the book, where he reviews the progress made in the first century of calculus, calculus marked a transition in perspective, from the geometric to the analytic. That is to say, the study of shapes and surfaces, and even of the paths of the planets in their orbits, which had been carried out on the basis of Euclid's geometry, now moved to the abstraction of algebraic expressions. The paths of the planets had long been considered to be perfect circles, and Kepler, who showed that they were ellipses, described the changing speed of a planet through the areas swept out by the line from the sun to the planet. Galileo had studied the motion of a falling object and arrived at a form of the equation of motion, but through a geometric construction.

It was Isaac Newton who analysed the paths of planets and falling objects in terms of the relationship between position, speed and how they changed with time, and then the way the shape of a curve changed along its length, in terms of numbers and ratios. It was in the course of this study that Newton developed the idea of the ‘fluxion’, the instantaneous rate of change of a quantity that changes with time: the speed of a falling body, for instance, not averaged over a period but at any instant. The idea could be adapted to other mutually dependent quantities, like the length of the arc of a circle, or the area traced out by a radius, for different angles through which the radius turns. The Greeks had approached this problem with geometric methods, but the method of fluxions led to a formula, which gives the familiar 2πr and πr² when the radius goes all the way round.
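In modern notation, rather than Newton's own dotted fluxions, the circle example can be worked out as below. This is an illustrative sketch, not a reconstruction from Dunham's book; it assumes the angle θ is measured in radians, with s the arc length and A the area traced out by a radius r. The last line shows a related fact: the rate at which the area of the full circle grows with its radius is its circumference.

$$
\begin{aligned}
s(\theta) &= r\theta, & A(\theta) &= \tfrac{1}{2} r^{2}\theta,\\
\frac{ds}{d\theta} &= r, & \frac{dA}{d\theta} &= \tfrac{1}{2} r^{2},\\
s(2\pi) &= 2\pi r, & A(2\pi) &= \pi r^{2},\\
\frac{d}{dr}\left(\pi r^{2}\right) &= 2\pi r. & &
\end{aligned}
$$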

With this method of dealing with smooth, or continuous, changes, Newton was able to work out an analytical expression for the path of a body moving under a central attractive force, like a planet, and the data, together with Kepler’s results, showed that the force must fall off as the square of the distance: the law of Gravitation.

While Newton is thus credited with the discovery of calculus, the same method, with greater mathematical finesse, was developed independently at around the same time by the German philosopher and polymath Gottfried Wilhelm Leibniz. Leibniz’s versatility extended to history, jurisprudence, languages, theology, logic and diplomacy. When he was just 27, Dunham writes, Leibniz was elected to the Royal Society for inventing a mechanical calculator that could perform all the operations of arithmetic!

The calculus that Leibniz developed was much closer to calculus as we know it, even in its notation. It was, formally, a method to examine the behavior of a mathematical function without the need to plot its values physically, and, more clearly than Newton’s approach, to do the reverse: to identify a function, given its behavior.

Before he takes the first interlude, Dunham explains and provides samples of the work of Newton and Leibniz, the Bernoulli brothers, and then Euler, which together cover almost a century from the time of Newton. The work done during this period first examined continuous processes as if they were step processes, which could be handled by conventional mathematics, and then reduced the steps until they were ‘vanishingly small’.

This computational device, of considering things that were ‘infinitely small’, was new, and its validity was suspect. The process consisted of taking a ratio, the ratio of the changes in a pair of variables, and shrinking the numerator and the denominator together. As smaller and smaller changes are considered, the ratio approaches a final value, at which point the numerator and the denominator have both shrunk to zero and vanished!
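The limiting process described above can be seen numerically. The short Python sketch below is only an illustration, not taken from Dunham's book; the function y = x², the point x = 3 and the helper names `difference_quotient` and `square` are arbitrary, hypothetical choices. For this function the ratio of changes works out to 6 + h, so it settles toward 6 as the step h shrinks, yet at h = 0 both changes are exactly zero and the ratio cannot be evaluated at all, which is the difficulty Berkeley seized upon.

```python
def difference_quotient(f, x, h):
    """Ratio of the change in f(x) to the change h in x."""
    return (f(x + h) - f(x)) / h


def square(x):
    """Illustrative function y = x * x (an arbitrary choice)."""
    return x * x


x0 = 3.0
# Steps of 0.1, 0.01, ..., 0.000001: each ten times smaller than the last.
steps = [10.0 ** -k for k in range(1, 7)]

for h in steps:
    rise = square(x0 + h) - square(x0)  # change in y, shrinking with h
    ratio = difference_quotient(square, x0, h)  # equals 6 + h, up to rounding
    print(f"h = {h:.0e}   change in y = {rise:.3e}   ratio = {ratio:.6f}")

# At h = 0 both changes are exactly zero, and the ratio 0/0 has no value.
try:
    difference_quotient(square, x0, 0.0)
except ZeroDivisionError:
    print("h = 0: the ratio becomes 0/0 and cannot be evaluated")
```

Each printed line shows the change in y shrinking toward zero while the ratio settles toward 6; the value 6 itself is reached only as a limit, the notion that the later work of Cauchy and Weierstrass made rigorous.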

The approach invited attack and criticism. The Irish philosopher George Berkeley “ridiculed those scientists who accused him of proceeding on faith and not reason, yet themselves talked of infinitely small or vanishing quantities,” Dunham says in the interlude. Although Berkeley did not dispute the results that calculus produced, he said the reasoning was faulty, and that “error may bring forth truth, but it cannot bring forth science.” Capable mathematicians did their best to firm up the foundations of calculus, but Berkeley held the field, and “the 18th Century ended with the logical crisis still unresolved,” says Dunham.

Dunham then describes the work of Cauchy, Riemann, Liouville and Weierstrass, which put calculus on robust conceptual foundations by the year 1873, nearly a century after Euler. Their work, done with “unprecedented care, taking pains to define their terms exactly and to prove results that had hitherto been accepted uncritically,” succeeded in putting to rest the objections raised by Berkeley and placed calculus on an unassailable footing. Then, in the last quarter of the 19th Century, Dunham says, this epochal work gave rise to questions that could not even have been conceived before it. In the final part of the book, Dunham describes Cantor, Volterra, Baire and Lebesgue.

Cantor went back to the basis of calculus, the idea that ratios could be considered at the vanishingly small limit, and worked on the nature of the ‘real’ numbers, which include irrational numbers as well as fractions. He then changed the direction of analysis by developing the principles of a grand generalization, set theory, including the study of ‘small’ and ‘infinite’ sets. The ideas were followed through by Volterra, Baire and Lebesgue, who “pushed analysis ever further towards generality and abstraction” and left the subject, as Dunham says, revitalized and ready to serve as the platform from which the advances of the 20th Century were launched.

In many disciplines, Dunham notes, there is a tradition of studying illustrious predecessors, like Shakespeare in literature or Bach in music. As this tradition is less common in mathematics, his book, Dunham says, is an assembly of masterpieces that reflects the excellence of what has gone before, and an appreciation of genius.

------------------------------------------------------------------------------------------ Do respond to: response@simplescience.in -------------------------------------------